SUSE Linux Enterprise Server 12 SP5

Storage Administration Guide

Provides information about how to manage storage devices on SUSE Linux Enterprise Server.

Publication Date: September 27, 2024

https://documentation.suse.com

Copyright © 2006–2024 SUSE LLC and contributors. All rights reserved.

Permission is granted to copy, distribute and/or modify this document under the terms of the GNU Free Documentation License, Version 1.2 or (at your option) version 1.3; with the Invariant Section being this copyright notice and license. A copy of the license version 1.2 is included in the section entitled “GNU Free Documentation License”.

For SUSE trademarks, see https://www.suse.com/company/legal/ . All third-party trademarks are the property

of their respective owners. Trademark symbols (®, ™ etc.) denote trademarks of SUSE and its affiliates. Asterisks

(*) denote third-party trademarks.

All information found in this book has been compiled with utmost attention to detail. However, this does not

guarantee complete accuracy. Neither SUSE LLC, its affiliates, the authors nor the translators shall be held liable

for possible errors or the consequences thereof.

Contents

About This Guide
1 Available documentation
2 Improving the documentation
3 Documentation conventions
4 Support
Support statement for SUSE Linux Enterprise Server • Technology previews

I FILE SYSTEMS AND MOUNTING

1 Overview of File Systems in Linux
1.1 Terminology
1.2 Btrfs
Key Features • The Root File System Setup on SUSE Linux Enterprise Server • Migration from Ext and ReiserFS File Systems to Btrfs • Btrfs Administration • Btrfs Quota Support for Subvolumes • Btrfs send/receive • Data Deduplication Support • Deleting Subvolumes from the Root File System
1.3 XFS
XFS formats
1.4 Ext2
1.5 Ext3
Easy and Highly Reliable Upgrades from Ext2 • Reliability and Performance • Converting an Ext2 File System into Ext3 • Ext3 File System Inode Size and Number of Inodes
1.6 Ext4
1.7 ReiserFS
1.8 Other Supported File Systems
1.9 Large File Support in Linux
1.10 Linux Kernel Storage Limitations
1.11 Troubleshooting File Systems
Btrfs Error: No space is left on device • Freeing Unused File System Blocks • Btrfs: Balancing Data across Devices • No Defragmentation on SSDs
1.12 Additional Information

2 Resizing File Systems
2.1 Use Cases
2.2 Guidelines for Resizing
File Systems that Support Resizing • Increasing the Size of a File System • Decreasing the Size of a File System
2.3 Changing the Size of a Btrfs File System
2.4 Changing the Size of an XFS File System
2.5 Changing the Size of an Ext2, Ext3, or Ext4 File System
2.6 Changing the Size of a Reiser File System

3 Using UUIDs to Mount Devices
3.1 Persistent Device Names with udev
3.2 Understanding UUIDs
3.3 Additional Information
3.4 Mounting network storage devices

4 Multi-tier Caching for Block Device Operations
4.1 General Terminology
4.2 Caching Modes
4.3 bcache
Main Features • Setting Up a bcache Device • bcache Configuration Using sysfs
4.4 lvmcache
Configuring lvmcache • Removing a Cache Pool

II LOGICAL VOLUMES (LVM) 51

5 LVM Configuration 52

5.1 Understanding the Logical Volume Manager 52

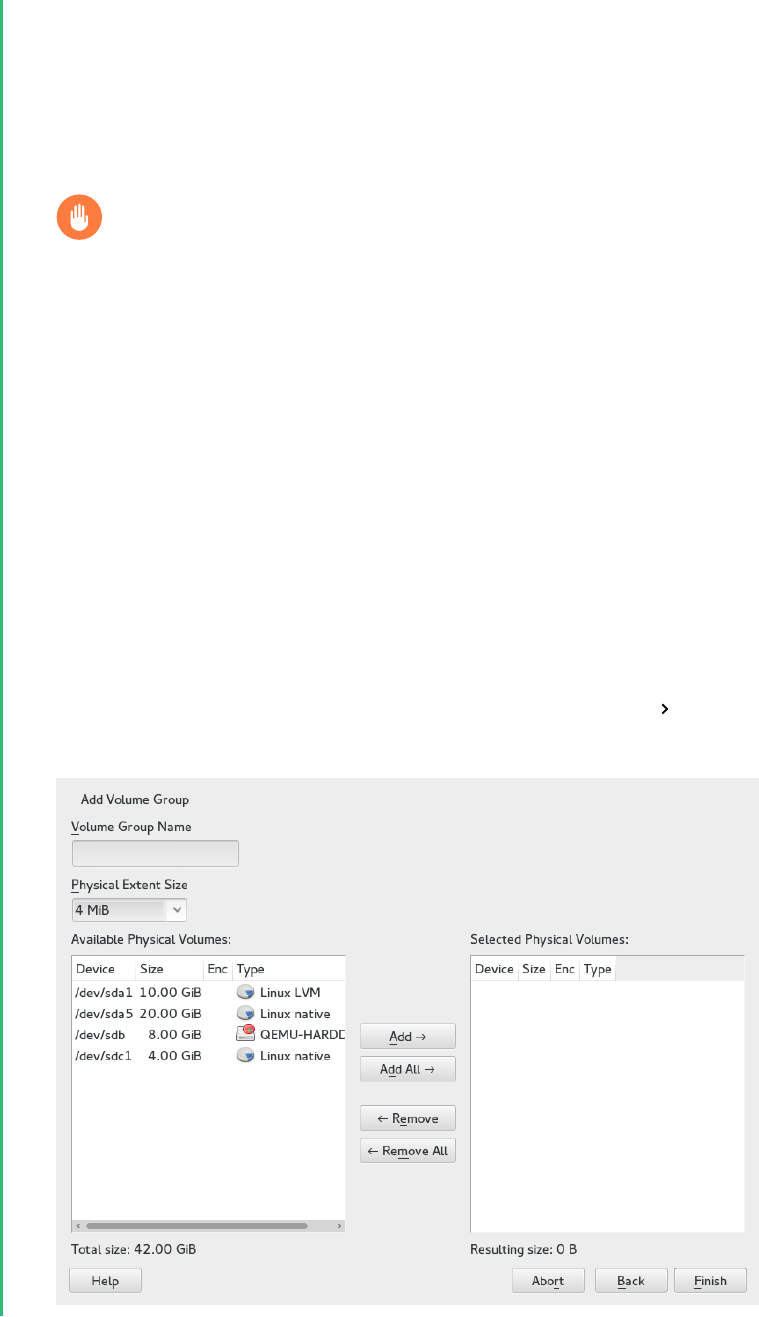

5.2 Creating Volume Groups 54

5.3 Creating Logical Volumes 57

Thinly Provisioned Logical Volumes 60 • Creating Mirrored Volumes 61

5.4 Automatically Activating Non-Root LVM Volume Groups 62

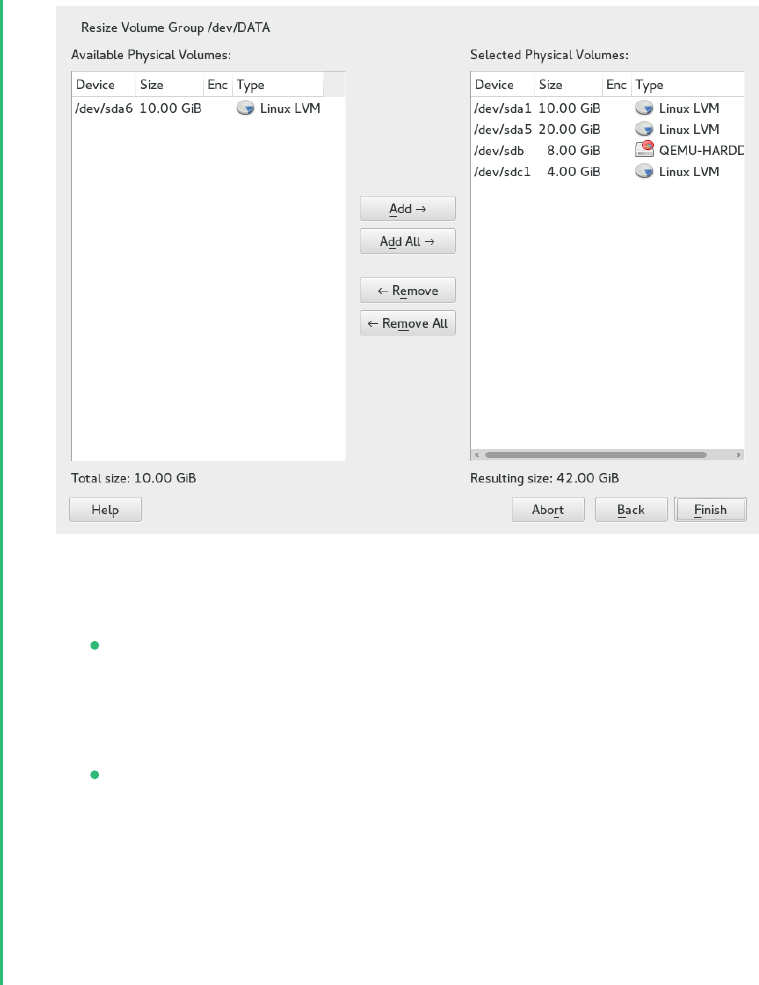

5.5 Resizing an Existing Volume Group 63

5.6 Resizing a Logical Volume 64

5.7 Deleting a Volume Group or a Logical Volume 66

5.8 Using LVM Commands 67

Resizing a Logical Volume with Commands 70 • Dynamic Aggregation of LVM

Metadata via lvmetad 72 • Using LVM Cache Volumes 73

5.9 Tagging LVM2 Storage Objects 74

Using LVM2 Tags 74 • Requirements for Creating LVM2

Tags 75 • Command Line Tag Syntax 75 • Configuration

File Syntax 76 • Using Tags for a Simple Activation Control in a

Cluster 77 • Using Tags to Activate On Preferred Hosts in a Cluster 78

6 LVM Volume Snapshots 81

6.1 Understanding Volume Snapshots 81

6.2 Creating Linux Snapshots with LVM 83

6.3 Monitoring a Snapshot 83

vi Storage Administration Guide

6.4 Deleting Linux Snapshots 84

6.5 Using Snapshots for Virtual Machines on a Virtual Host 84

6.6 Merging a Snapshot with the Source Logical Volume to Revert Changes

or Roll Back to a Previous State 86

III SOFTWARE RAID

7 Software RAID Configuration
7.1 Understanding RAID Levels
RAID 0 • RAID 1 • RAID 2 and RAID 3 • RAID 4 • RAID 5 • RAID 6 • Nested and Complex RAID Levels
7.2 Soft RAID Configuration with YaST
RAID Names
7.3 Troubleshooting Software RAIDs
Recovery after Failing Disk is Back Again
7.4 For More Information

8 Configuring Software RAID for the Root Partition
8.1 Prerequisites for Using a Software RAID Device for the Root Partition
8.2 Setting Up the System with a Software RAID Device for the Root (/) Partition

9 Creating Software RAID 10 Devices
9.1 Creating Nested RAID 10 Devices with mdadm
Creating Nested RAID 10 (1+0) with mdadm • Creating Nested RAID 10 (0+1) with mdadm
9.2 Creating a Complex RAID 10
Number of Devices and Replicas in the Complex RAID 10 • Layout • Creating a Complex RAID 10 with the YaST Partitioner • Creating a Complex RAID 10 with mdadm

10 Creating a Degraded RAID Array

11 Resizing Software RAID Arrays with mdadm
11.1 Increasing the Size of a Software RAID
Increasing the Size of Component Partitions • Increasing the Size of the RAID Array • Increasing the Size of the File System
11.2 Decreasing the Size of a Software RAID
Decreasing the Size of the File System • Decreasing the Size of the RAID Array • Decreasing the Size of Component Partitions

12 Storage Enclosure LED Utilities for MD Software RAIDs
12.1 The Storage Enclosure LED Monitor Service
12.2 The Storage Enclosure LED Control Application
Pattern Names • List of Devices • Examples
12.3 Additional Information

IV NETWORK STORAGE

13 iSNS for Linux
13.1 How iSNS Works
13.2 Installing iSNS Server for Linux
13.3 Configuring iSNS Discovery Domains
Creating iSNS Discovery Domains • Adding iSCSI Nodes to a Discovery Domain
13.4 Starting the iSNS Service
13.5 For More Information

14 Mass Storage over IP Networks: iSCSI
14.1 Installing the iSCSI LIO Target Server and iSCSI Initiator
14.2 Setting Up an iSCSI LIO Target Server
iSCSI LIO Target Service Start-up and Firewall Settings • Configuring Authentication for Discovery of iSCSI LIO Targets and Initiators • Preparing the Storage Space • Setting Up an iSCSI LIO Target Group • Modifying an iSCSI LIO Target Group • Deleting an iSCSI LIO Target Group
14.3 Configuring iSCSI Initiator
Using YaST for the iSCSI Initiator Configuration • Setting Up the iSCSI Initiator Manually • The iSCSI Initiator Databases
14.4 Using iSCSI Disks when Installing
14.5 Troubleshooting iSCSI
Portal Error When Setting Up Target LUNs on an iSCSI LIO Target Server • iSCSI LIO Targets Are Not Visible from Other Computers • Data Packets Dropped for iSCSI Traffic • Using iSCSI Volumes with LVM • iSCSI Targets Are Mounted When the Configuration File Is Set to Manual
14.6 iSCSI LIO Target Terminology
14.7 Additional Information

15 Fibre Channel Storage over Ethernet Networks: FCoE
15.1 Configuring FCoE Interfaces during the Installation
15.2 Installing FCoE and the YaST FCoE Client
15.3 Managing FCoE Services with YaST
15.4 Configuring FCoE with Commands
15.5 Managing FCoE Instances with the FCoE Administration Tool
15.6 Additional Information

16 NVMe-oF
16.1 Overview
16.2 Setting Up an NVMe-oF Host
Installing Command Line Client • Discovering NVMe-oF Targets • Connecting to NVMe-oF Targets • Multipathing
16.3 Setting Up an NVMe-oF Target
Installing Command Line Client • Configuration Steps • Back Up and Restore Target Configuration
16.4 Special Hardware Configuration
Overview • Broadcom • Marvell
16.5 More Information

17 Managing multipath I/O for devices
17.1 Understanding multipath I/O
Multipath terminology
17.2 Hardware support
Multipath implementations: device mapper and NVMe • Storage array autodetection for multipathing • Storage arrays that require specific hardware handlers
17.3 Planning for multipathing
Prerequisites • Multipath installation types • Disk management tasks • Software RAID and complex storage stacks • High-availability solutions
17.4 Installing SUSE Linux Enterprise Server on multipath systems
Installing without connected multipath devices • Installing with connected multipath devices
17.5 Updating SLE on multipath systems
17.6 Multipath management tools
Device mapper multipath module • The multipathd daemon • The multipath command • SCSI persistent reservations and mpathpersist
17.7 Configuring the system for multipathing
Enabling, starting, and stopping multipath services • Preparing SAN devices for multipathing • Partitions on multipath devices and kpartx • Keeping the initramfs synchronized
17.8 Multipath configuration
Creating /etc/multipath.conf • multipath.conf syntax • multipath.conf sections • Applying multipath.conf modifications
17.9 Configuring policies for failover, queuing, and failback
Queuing policy on stand-alone servers • Queuing policy on clustered servers
17.10 Configuring path grouping and priorities
17.11 Selecting devices for multipathing
The blacklist section in multipath.conf • The blacklist exceptions section in multipath.conf • Other options affecting device selection
17.12 Multipath device names and WWIDs
WWIDs and device Identification • Setting aliases for multipath maps • Using autogenerated user-friendly names • Referring to multipath maps
17.13 Miscellaneous options
Handling unreliable (“marginal”) path devices
17.14 Best practice
Best practices for configuration • Interpreting multipath I/O status • Using LVM2 on multipath devices • Resolving stalled I/O • MD RAID on multipath devices • Scanning for new devices without rebooting
17.15 Troubleshooting MPIO
Understanding device selection issues • Understanding device referencing issues • Troubleshooting steps in emergency mode • Technical information documents

About This Guide

This guide provides information about how to manage storage devices on SUSE Linux Enterprise Server 12 SP5. For information about partitioning and managing devices, see Book “Deployment Guide”, Chapter 13 “Advanced Disk Setup”. This guide is intended for system administrators.

1 Available documentation

Online documentation

Our documentation is available online at https://documentation.suse.com . Browse or

download the documentation in various formats.

Note: Latest updates

The latest updates are usually available in the English-language version of this documentation.

SUSE Knowledgebase

If you have run into an issue, also check out the Technical Information Documents (TIDs) that are available online at https://www.suse.com/support/kb/ . Search the SUSE Knowledgebase for known solutions driven by customer need.

Release notes

For release notes, see https://www.suse.com/releasenotes/ .

In your system

For offline use, the release notes are also available under /usr/share/doc/release-notes on your system. The documentation for individual packages is available at /usr/share/doc/packages .

Many commands are also described in their manual pages. To view them, run man, followed by a specific command name. If the man command is not installed on your system, install it with sudo zypper install man .

2 Improving the documentation

Your feedback and contributions to this documentation are welcome. The following channels

for giving feedback are available:

Service requests and support

For services and support options available for your product, see https://www.suse.com/support/ .

To open a service request, you need a SUSE subscription registered at SUSE Customer

Center. Go to https://scc.suse.com/support/requests , log in, and click Create New.

Bug reports

Report issues with the documentation at https://bugzilla.suse.com/ .

To simplify this process, click the Report an issue icon next to a headline in the HTML

version of this document. This preselects the right product and category in Bugzilla and

adds a link to the current section. You can start typing your bug report right away.

A Bugzilla account is required.

Contributions

To contribute to this documentation, click the Edit source document icon next to a headline

in the HTML version of this document. This will take you to the source code on GitHub,

where you can open a pull request.

A GitHub account is required.

Note: Edit source document only available for English

The Edit source document icons are only available for the English version of each

document. For all other languages, use the Report an issue icons instead.

For more information about the documentation environment used for this documentation,

see the repository's README.

Mail

You can also report errors and send feedback concerning the documentation to doc-

of the document. Additionally, include the relevant section number and title (or provide

the URL) and provide a concise description of the problem.

3 Documentation conventions

The following notices and typographic conventions are used in this document:

/etc/passwd : Directory names and file names

PLACEHOLDER : Replace PLACEHOLDER with the actual value

PATH : An environment variable

ls , --help : Commands, options, and parameters

user : The name of a user or group

package_name : The name of a software package

Alt , Alt – F1 : A key to press or a key combination. Keys are shown in uppercase as on a keyboard.

File, File Save As: menu items, buttons

AMD/Intel

This paragraph is only relevant for the AMD64/Intel 64 architectures. The

arrows mark the beginning and the end of the text block.

IBM Z, POWER

This paragraph is only relevant for the architectures IBM Z and POWER .

The arrows mark the beginning and the end of the text block.

Chapter 1, “Example chapter”: A cross-reference to another chapter in this guide.

Commands that must be run with root privileges. You can also prefix these commands

with the sudo command to run them as a non-privileged user:

root # command

tux > sudo command

Commands that can be run by non-privileged users:

tux > command

Commands can be split into two or multiple lines by a backslash character ( \ ) at the end

of a line. The backslash informs the shell that the command invocation will continue after

the line's end:

tux > echo a b \

c d

A code block that shows both the command (preceded by a prompt) and the respective

output returned by the shell:

tux > command

output

Notices

Warning: Warning notice

Vital information you must be aware of before proceeding. Warns you about security

issues, potential loss of data, damage to hardware, or physical hazards.

Important: Important notice

Important information you should be aware of before proceeding.

Note: Note notice

Additional information, for example about differences in software versions.

Tip: Tip notice

Helpful information, like a guideline or a piece of practical advice.

Compact Notices

Additional information, for example about differences in software versions.

Helpful information, like a guideline or a piece of practical advice.

4 Support

Find the support statement for SUSE Linux Enterprise Server and general information about technology previews below. For details about the product lifecycle, see https://www.suse.com/lifecycle .

If you are entitled to support, find details on how to collect information for a support ticket at https://documentation.suse.com/sles-15/html/SLES-all/cha-adm-support.html .

4.1 Support statement for SUSE Linux Enterprise Server

To receive support, you need an appropriate subscription with SUSE. To view the specific support

offers available to you, go to https://www.suse.com/support/ and select your product.

The support levels are defined as follows:

L1

Problem determination, which means technical support designed to provide compatibility information, usage support, ongoing maintenance, information gathering and basic troubleshooting using available documentation.

L2

Problem isolation, which means technical support designed to analyze data, reproduce customer problems, isolate a problem area and provide a resolution for problems not resolved by Level 1 or prepare for Level 3.

L3

Problem resolution, which means technical support designed to resolve problems by engaging engineering to resolve product defects which have been identified by Level 2 Support.

For contracted customers and partners, SUSE Linux Enterprise Server is delivered with L3 support for all packages, except for the following:

Technology previews.

Sound, graphics, fonts, and artwork.

Packages that require an additional customer contract.

Some packages shipped as part of the module Workstation Extension are L2-supported only.

Packages with names ending in -devel (containing header files and similar developer resources) will only be supported together with their main packages.

SUSE will only support the usage of original packages. That is, packages that are unchanged

and not recompiled.

4.2 Technology previews

Technology previews are packages, stacks, or features delivered by SUSE to provide glimpses

into upcoming innovations. Technology previews are included for your convenience to give you

a chance to test new technologies within your environment. We would appreciate your feedback.

If you test a technology preview, please contact your SUSE representative and let them know

about your experience and use cases. Your input is helpful for future development.

Technology previews have the following limitations:

Technology previews are still in development. Therefore, they may be functionally incomplete, unstable, or otherwise not suitable for production use.

Technology previews are not supported.

Technology previews may only be available for specific hardware architectures.

Details and functionality of technology previews are subject to change. As a result, upgrading to subsequent releases of a technology preview may be impossible and require a fresh installation.

SUSE may discover that a preview does not meet customer or market needs, or does not

comply with enterprise standards. Technology previews can be removed from a product

at any time. SUSE does not commit to providing a supported version of such technologies

in the future.

For an overview of technology previews shipped with your product, see the release notes at

https://www.suse.com/releasenotes .

1 Overview of File Systems in Linux

SUSE Linux Enterprise Server ships with different file systems from which to choose, including Btrfs, Ext4, Ext3, Ext2, ReiserFS and XFS. Each file system has its own advantages and disadvantages. For a side-by-side feature comparison of the major file systems in SUSE Linux Enterprise Server, see https://www.suse.com/releasenotes/x86_64/SUSE-SLES/12-SP5/#TechInfo.Filesystems (File System Support and Sizes). This chapter contains an overview of how these file systems work and what advantages they offer.

With SUSE Linux Enterprise 12, Btrfs is the default file system for the operating system and XFS is the default for all other use cases. SUSE also continues to support the Ext family of file systems, ReiserFS and OCFS2. By default, the Btrfs file system will be set up with subvolumes. Snapshots will be automatically enabled for the root file system using the snapper infrastructure. For more information about snapper, refer to Book “Administration Guide”, Chapter 7 “System Recovery and Snapshot Management with Snapper”.

Professional high-performance setups might require a highly available storage system. To meet the requirements of high-performance clustering scenarios, SUSE Linux Enterprise Server includes OCFS2 (Oracle Cluster File System 2) and the Distributed Replicated Block Device (DRBD) in the High Availability add-on. These advanced storage systems are not covered in this guide. For information, see the SUSE Linux Enterprise High Availability Administration Guide at http://www.suse.com/doc .

It is very important to remember that no file system best suits all kinds of applications. Each file system has its particular strengths and weaknesses, which must be taken into account. In addition, even the most sophisticated file system cannot replace a reasonable backup strategy.

The terms data integrity and data consistency, when used in this section, do not refer to the consistency of the user space data (the data your application writes to its files). Whether this data is consistent must be controlled by the application itself.

Unless stated otherwise in this section, all the steps required to set up or change partitions and file systems can be performed by using the YaST Partitioner (which is also strongly recommended). For information, see Book “Deployment Guide”, Chapter 13 “Advanced Disk Setup”.

1.1 Terminology

metadata

A data structure that is internal to the file system. It ensures that all of the on-disk data is properly organized and accessible. Essentially, it is “data about the data.” Almost every file system has its own structure of metadata, which is one reason the file systems show different performance characteristics. It is extremely important to maintain metadata intact, because otherwise all data on the file system could become inaccessible.

inode

A data structure on a file system that contains a variety of information about a file, including size, number of links, pointers to the disk blocks where the file contents are actually stored, and date and time of creation, modification, and access.

journal

In the context of a file system, a journal is an on-disk structure containing a type of log in which the file system stores what it is about to change in the file system’s metadata. Journaling greatly reduces the recovery time of a file system because it has no need for the lengthy search process that checks the entire file system at system start-up. Instead, only the journal is replayed.
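Several of the fields named in the inode entry above can be inspected from the shell. The following is a minimal sketch, assuming the GNU coreutils stat command with its -c format option is available; the function name show_inode_info is made up for this illustration.

```shell
#!/bin/sh
# show_inode_info FILE: print selected inode fields of FILE.
# (Hypothetical helper name; relies on the -c format option of GNU stat.)
show_inode_info() {
  # %i = inode number, %h = number of hard links,
  # %s = size in bytes, %y = time of last data modification
  stat -c 'inode=%i links=%h size=%s modified=%y' "$1"
}
```

For example, show_inode_info /etc/passwd prints one line for that file. Creating a hard link with ln raises the links value by one, because both names then refer to the same inode.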

1.2 Btrfs

Btrfs is a copy-on-write (COW) file system developed by Chris Mason. It is based on COW-friendly B-trees developed by Ohad Rodeh. Btrfs is a logging-style file system. Instead of journaling the block changes, it writes them in a new location, then links the change in. Until the last write, the new changes are not committed.

1.2.1 Key Features

Btrfs provides fault tolerance, repair, and easy management features, such as the following:

Writable snapshots that allow you to easily roll back your system if needed after applying updates, or to back up files.

Subvolume support: Btrfs creates a default subvolume in its assigned pool of space. It allows you to create additional subvolumes that act as individual file systems within the same pool of space. The number of subvolumes is limited only by the space allocated to the pool.

The online check and repair functionality scrub is available as part of the Btrfs command line tools. It verifies the integrity of data and metadata, assuming the tree structure is fine. You can run scrub periodically on a mounted file system; it runs as a background process during normal operation.

Different RAID levels for metadata and user data.

Different checksums for metadata and user data to improve error detection.

Integration with Linux Logical Volume Manager (LVM) storage objects.

Integration with the YaST Partitioner and AutoYaST on SUSE Linux Enterprise Server. This also includes creating a Btrfs file system on Multiple Devices (MD) and Device Mapper (DM) storage configurations.

Offline migration from existing Ext2, Ext3, and Ext4 file systems.

Boot loader support for /boot , allowing you to boot from a Btrfs partition.

Multivolume Btrfs is supported in RAID 0, RAID 1, and RAID 10 profiles in SUSE Linux Enterprise Server 12 SP5. Higher RAID levels are not supported yet, but might be enabled with a future service pack.

Use Btrfs commands to set up transparent compression.

1.2.2 The Root File System Setup on SUSE Linux Enterprise Server

By default, SUSE Linux Enterprise Server is set up using Btrfs and snapshots for the root partition. Snapshots allow you to easily roll back your system if needed after applying updates, or to back up files. Snapshots can easily be managed with the SUSE Snapper infrastructure as explained in Book “Administration Guide”, Chapter 7 “System Recovery and Snapshot Management with Snapper”. For general information about the SUSE Snapper project, see the Snapper Portal wiki at OpenSUSE.org (http://snapper.io ).

When using a snapshot to roll back the system, it must be ensured that data such as users' home directories, Web and FTP server contents or log files do not get lost or overwritten during a rollback. This is achieved by using Btrfs subvolumes on the root file system. Subvolumes can be excluded from snapshots. The default root file system setup on SUSE Linux Enterprise Server as proposed by YaST during the installation contains the following subvolumes. They are excluded from snapshots for the reasons given below.

/boot/grub2/i386-pc , /boot/grub2/x86_64-efi , /boot/grub2/powerpc-ieee1275 , /boot/grub2/s390x-emu

A rollback of the boot loader configuration is not supported. The directories listed above are architecture-specific. The first two directories are present on AMD64/Intel 64 machines, the latter two on IBM POWER and on IBM Z, respectively.

/home

If /home does not reside on a separate partition, it is excluded to avoid data loss on rollbacks.

/opt , /var/opt

Third-party products usually get installed to /opt . It is excluded to avoid uninstalling

these applications on rollbacks.

/srv

Contains data for Web and FTP servers. It is excluded to avoid data loss on rollbacks.

/tmp , /var/tmp , /var/cache , /var/crash

All directories containing temporary files and caches are excluded from snapshots.

/usr/local

This directory is used when manually installing software. It is excluded to avoid uninstalling these installations on rollbacks.

/var/lib/libvirt/images

The default location for virtual machine images managed with libvirt. Excluded to ensure

virtual machine images are not replaced with older versions during a rollback. By default,

this subvolume is created with the option no copy on write .

/var/lib/mailman , /var/spool

Directories containing mail or mail queues are excluded to avoid a loss of mail after a rollback.

/var/lib/named

Contains zone data for the DNS server. Excluded from snapshots to ensure a name server

can operate after a rollback.

/var/lib/mariadb , /var/lib/mysql , /var/lib/pgsql

These directories contain database data. By default, these subvolumes are created with the

option no copy on write .

/var/log

Log file location. Excluded from snapshots to allow log file analysis after the rollback of a broken system. By default, /var/log has the NoCOW attribute set, disabling copy-on-write, which improves performance and reduces the number of duplicate blocks. Verify with lsattr :

tux > lsattr -l /var/

/var/log No_COW

Warning: Support for Rollbacks

Rollbacks are only supported by SUSE support if you do not remove any of the preconfigured subvolumes. You may, however, add additional subvolumes using the YaST Partitioner.

1.2.2.1 Mounting Compressed Btrfs File Systems

Note: GRUB2 and LZO Compressed Root

GRUB2 cannot read an lzo compressed root. You need a separate /boot partition to

use compression.

Since SLE 12 SP1, compression for Btrfs file systems is supported. Use the compress or compress-force option and select the compression algorithm, lzo or zlib (the default), for example compress=lzo . The zlib algorithm achieves a higher compression ratio, while lzo is faster and uses less CPU.

For example:

root # mount -o compress /dev/sdx /mnt

If you create a file, write to it, and the compressed result is greater than or equal to the uncompressed size, Btrfs permanently skips compression for all future writes to this file. If you do not want this behavior, use the compress-force option. This can be useful for files that start with some incompressible data.
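To get a feel for why compressing data that does not shrink is wasted effort, the effect can be reproduced outside the file system. The sketch below is an illustration only: it uses the gzip tool, which implements the same DEFLATE algorithm as zlib, as a stand-in and does not involve Btrfs at all. The function name compressed_size is an assumption made for this example.

```shell
#!/bin/sh
# compressed_size FILE: print the size in bytes of FILE after gzip compression.
# gzip only stands in for the zlib algorithm here; Btrfs is not involved.
compressed_size() {
  gzip -c "$1" | wc -c
}

tmp=$(mktemp -d)
# 64 KiB of random data (practically incompressible) and 64 KiB of zeros.
head -c 65536 /dev/urandom > "$tmp/random"
head -c 65536 /dev/zero    > "$tmp/zeros"

echo "random: 65536 -> $(compressed_size "$tmp/random") bytes"
echo "zeros:  65536 -> $(compressed_size "$tmp/zeros") bytes"
rm -rf "$tmp"
```

The random file ends up no smaller than its input, which corresponds to the case in which compression is skipped, while the file of zeros shrinks to a small fraction of its size.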

Note that compression takes effect for new files only. Files that were written without compression are not compressed when the file system is mounted with the compress or compress-force option. Furthermore, files with the nodatacow attribute never get their extents compressed:

root # chattr +C FILE

root # mount -o nodatacow /dev/sdx /mnt

Encryption is independent of any compression. After you have written some data to this partition, print the details:

root # btrfs filesystem show /mnt

Label: 'Test-Btrfs' uuid: 62f0c378-e93e-4aa1-9532-93c6b780749d

Total devices 1 FS bytes used 3.22MiB

devid 1 size 2.00GiB used 240.62MiB path /dev/sdb1

If you want this to be permanent, add the compress or compress-force option to the /etc/fstab configuration file. For example:

UUID=1a2b3c4d /home btrfs subvol=@/home,compress 0 0

1.2.2.2 Mounting Subvolumes

A system rollback from a snapshot on SUSE Linux Enterprise Server is performed by booting from the snapshot first. This allows you to check the snapshot while running before doing the rollback. Being able to boot from snapshots is achieved by mounting the subvolumes (which would normally not be necessary).

In addition to the subvolumes listed in Section 1.2.2, “The Root File System Setup on SUSE Linux Enterprise Server”, a subvolume named @ exists. This is the default subvolume that will be mounted as the root partition ( / ). The other subvolumes will be mounted into this subvolume.

When booting from a snapshot, the snapshot is used instead of the @ subvolume. The parts of the file system included in the snapshot will be mounted read-only as / . The other subvolumes will be mounted writable into the snapshot. This state is temporary by default: the previous configuration will be restored with the next reboot. To make it permanent, execute the snapper rollback command. This will make the snapshot that is currently booted the new default subvolume, which will be used after a reboot.
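Because a booted snapshot is mounted read-only as / , the mount options of the root file system give a quick hint whether the system is currently running from a snapshot. The following sketch assumes the usual /proc/mounts layout (device, mount point, type, options); the function name root_is_readonly is hypothetical. A read-only root can have other causes too, so treat the result as a hint only.

```shell
#!/bin/sh
# root_is_readonly: read mount table lines (format of /proc/mounts) on stdin.
# Exit status 0 if / is mounted read-only, 1 otherwise.
# (Hypothetical helper name, used for illustration only.)
root_is_readonly() {
  awk '
    $2 == "/" {
      # Re-evaluate for every entry of /; the last entry wins.
      ro = 0
      n = split($4, opt, ",")
      for (i = 1; i <= n; i++)
        if (opt[i] == "ro") ro = 1
    }
    END { exit (ro ? 0 : 1) }
  '
}

if root_is_readonly < /proc/mounts; then
  echo "/ is mounted read-only (possibly booted from a snapshot)"
else
  echo "/ is mounted read-write"
fi
```

The check only reads the mount table and changes nothing; making the booted snapshot permanent still requires the snapper rollback command described above.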

1.2.2.3 Checking for Free Space

File system usage is usually checked by running the df command. On a Btrfs file system, the output of df can be misleading, because in addition to the space the raw data allocates, a Btrfs file system also allocates and uses space for metadata.

Consequently, a Btrfs file system may report being out of space even though it seems that plenty of space is still available. In that case, all space allocated for the metadata is used up. Use the following commands to check for used and available space on a Btrfs file system:

btrfs filesystem show

tux > sudo btrfs filesystem show /

Label: 'ROOT' uuid: 52011c5e-5711-42d8-8c50-718a005ec4b3

Total devices 1 FS bytes used 10.02GiB

devid 1 size 20.02GiB used 13.78GiB path /dev/sda3

Shows the total size of the file system and its usage. If these two values in the last line match, all space on the file system has been allocated.

btrfs filesystem df

tux > sudo btrfs filesystem df /

Data, single: total=13.00GiB, used=9.61GiB

System, single: total=32.00MiB, used=16.00KiB

Metadata, single: total=768.00MiB, used=421.36MiB

GlobalReserve, single: total=144.00MiB, used=0.00B

Shows values for allocated ( total ) and used space of the file system. If the values for total and used for the metadata are almost equal, all space for metadata has been allocated.

btrfs filesystem usage

tux > sudo btrfs filesystem usage /

Overall:

Device size: 20.02GiB

Device allocated: 13.78GiB

Device unallocated: 6.24GiB

Device missing: 0.00B

Used: 10.02GiB

Free (estimated): 9.63GiB (min: 9.63GiB)

Data ratio: 1.00

Metadata ratio: 1.00

Global reserve: 144.00MiB (used: 0.00B)

Data Metadata System

Id Path single single single Unallocated

-- --------- -------- --------- -------- -----------

1 /dev/sda3 13.00GiB 768.00MiB 32.00MiB 6.24GiB

-- --------- -------- --------- -------- -----------

Total 13.00GiB 768.00MiB 32.00MiB 6.24GiB

Used 9.61GiB 421.36MiB 16.00KiB

Shows data similar to that of the two previous commands combined.

For more information, refer to man 8 btrfs-filesystem and https://btrfs.wiki.kernel.org/index.php/FAQ .
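The metadata check described for btrfs filesystem df can be scripted. The following is only a minimal sketch: the function name metadata_used_percent is hypothetical and not part of the Btrfs tools, and it assumes the output was produced with the -b option (raw byte counts), so that no unit conversion is needed.

```shell
# Hypothetical helper: reads `btrfs filesystem df -b` output on stdin and
# prints what percentage of the allocated metadata space is in use.
metadata_used_percent() {
  # Split each line on "=" and ",". In a line such as
  #   Metadata, single: total=805306368, used=441827328
  # field 3 holds the allocated bytes and field 5 the used bytes.
  awk -F'[=,]' '/^Metadata/ { printf "%d\n", ($5 * 100) / $3 }'
}
```

A possible invocation is sudo btrfs filesystem df -b / | metadata_used_percent . A result close to 100 suggests that the allocated metadata space is nearly used up.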

1.2.3 Migration from Ext and ReiserFS File Systems to Btrfs

You can migrate data volumes from existing Ext (Ext2, Ext3, or Ext4) or ReiserFS to the Btrfs file system. The conversion process occurs offline and in place on the device. The file system needs at least 15% of available free space on the device.

To convert the file system to Btrfs, take the file system offline, then enter:

sudo btrfs-convert DEVICE

To roll back the migration to the original file system, take the file system offline, then enter:

sudo btrfs-convert -r DEVICE

Warning: Root File System Conversion not Supported

Converting the root file system to Btrfs is not supported. Either keep the existing file system or re-install the whole system from scratch.

Important: Possible Loss of Data

When rolling back to the original file system, all data that you added after the conversion to Btrfs will be lost. That is, only the original data is converted back to the previous file system.

1.2.4 Btrfs Administration

Btrfs is integrated in the YaST Partitioner and AutoYaST. It is available during the installation to allow you to set up a solution for the root file system. You can use the YaST Partitioner after the installation to view and manage Btrfs volumes.

Btrfs administration tools are provided in the btrfsprogs package. For information about using

Btrfs commands, see the man 8 btrfs , man 8 btrfsck , and man 8 mkfs.btrfs commands.

For information about Btrfs features, see the Btrfs wiki at http://btrfs.wiki.kernel.org .

1.2.5 Btrfs Quota Support for Subvolumes

The Btrfs root file system subvolumes /var/log , /var/crash and /var/cache can use all of the available disk space during normal operation, and cause a system malfunction. To help avoid this situation, SUSE Linux Enterprise Server now offers Btrfs quota support for subvolumes. If you set up the root file system by using the respective YaST proposal, it is prepared accordingly: quota groups ( qgroup ) for all subvolumes are already set up. To set a quota for a subvolume in the root file system, proceed as follows:

Note: Btrfs Quota Groups Can Incur Degraded Performance

On SUSE Linux Enterprise Server 12 SP5, using Btrfs quota groups can degrade file system performance.

1.

Enable quota support:

sudo btrfs quota enable /

2.

Get a list of subvolumes:

sudo btrfs subvolume list /

Quotas can only be set for existing subvolumes.

3.

Set a quota for one of the subvolumes that was listed in the previous step. A subvolume can either be identified by path (for example /var/tmp ) or by 0/SUBVOLUME ID (for example 0/272 ). The following example sets a quota of five GB for /var/tmp .

sudo btrfs qgroup limit 5G /var/tmp

The size can be specified in bytes (5000000000), kilobytes (5000000K), megabytes (5000M), or gigabytes (5G). The resulting values in bytes differ slightly, since 1024 bytes = 1 KiB, 1024 KiB = 1 MiB, etc.

4.

To list the existing quotas, use the following command. The column max_rfer shows the

quota in bytes.

sudo btrfs qgroup show -r /

Tip: Nullifying a Quota

In case you want to nullify an existing quota, set a quota size of none :

sudo btrfs qgroup limit none /var/tmp

To disable quota support for a partition and all its subvolumes, use btrfs quota disable :

sudo btrfs quota disable /

See man 8 btrfs-qgroup and man 8 btrfs-quota for more details. The UseCases page on the Btrfs wiki (https://btrfs.wiki.kernel.org/index.php/UseCases ) also provides more information.
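The difference between the size notations used for quotas can be made visible with a little arithmetic. The following sketch assumes that the suffixes K, M, and G are interpreted as binary multiples, as stated in the procedure above; the function name size_to_bytes is hypothetical and only serves this illustration.

```shell
# Hypothetical helper: convert a size such as 5G, 5000M, 5000000K, or a
# plain byte count to bytes, using binary multiples (1K = 1024 bytes).
size_to_bytes() {
  _v=${1%[KMG]}                               # numeric part without suffix
  case $1 in
    *K) echo $(( _v * 1024 )) ;;
    *M) echo $(( _v * 1024 * 1024 )) ;;
    *G) echo $(( _v * 1024 * 1024 * 1024 )) ;;
    *)  echo "$_v" ;;                         # no suffix: already bytes
  esac
}

# Compare the four notations from the example above.
for size in 5000000000 5000000K 5000M 5G; do
  echo "$size = $(size_to_bytes "$size") bytes"
done
```

Under this assumption, 5G is the largest of the four values and the plain byte count the smallest.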

1.2.6 Btrfs send/receive

Btrfs allows you to create snapshots to capture the state of the file system. Snapper, for example, uses this feature to create snapshots before and after system changes, allowing a rollback. However, together with the send/receive feature, snapshots can also be used to create and maintain copies of a file system in a remote location. This feature can, for example, be used to do incremental backups.

A btrfs send operation calculates the difference between two read-only snapshots from the same subvolume and sends it to a file or to STDOUT. A btrfs receive operation takes the result of the send command and applies it to a snapshot.

1.2.6.1 Prerequisites

To use Btrfs's send/receive feature, the following requirements need to be met:

A Btrfs file system is required on the source side ( send ) and on the target side ( receive ).

Btrfs send/receive operates on snapshots, therefore the respective data needs to reside in

a Btrfs subvolume.

Snapshots on the source side need to be read-only.

SUSE Linux Enterprise 12 SP2 or later. Earlier versions of SUSE Linux Enterprise do not support send/receive.

1.2.6.2 Incremental Backups

The following procedure shows the basic usage of Btrfs send/receive using the example of creating incremental backups of /data (source side) in /backup/data (target side). /data needs to be a subvolume.

PROCEDURE 1.1: INITIAL SETUP

1.

Create the initial snapshot (called bkp_data in this example) on the source side and make sure it is written to the disk:

sudo btrfs subvolume snapshot -r /data /data/bkp_data

sync

A new subvolume /data/bkp_data is created. It will be used as the basis for the next

incremental backup and should be kept as a reference.

2.

Send the initial snapshot to the target side. Since this is the initial send/receive operation,

the complete snapshot needs to be sent:

sudo bash -c 'btrfs send /data/bkp_data | btrfs receive /backup'

A new subvolume /backup/bkp_data is created on the target side.

When the initial setup has been finished, you can create incremental backups and send the

differences between the current and previous snapshots to the target side. The procedure is

always the same:

1.

Create a new snapshot on the source side.

2.

Send the differences to the target side.

3.

Optional: Rename and/or clean up snapshots on both sides.

PROCEDURE 1.2: PERFORMING AN INCREMENTAL BACKUP

1.

Create a new snapshot on the source side and make sure it is written to the disk. In the

following example the snapshot is named bkp_data_ CURRENT_DATE :

sudo btrfs subvolume snapshot -r /data /data/bkp_data_$(date +%F)

sync

A new subvolume, for example /data/bkp_data_2016-07-07 , is created.

2.

Send the difference between the previous snapshot and the one you have created to the target side. This is achieved by specifying the previous snapshot with the option -p SNAPSHOT .

sudo bash -c 'btrfs send -p /data/bkp_data /data/bkp_data_2016-07-07 \

| btrfs receive /backup'

A new subvolume /backup/bkp_data_2016-07-07 is created.

3.

As a result four snapshots, two on each side, exist:

/data/bkp_data

/data/bkp_data_2016-07-07

/backup/bkp_data

/backup/bkp_data_2016-07-07

Now you have three options for how to proceed:

Keep all snapshots on both sides. With this option you can roll back to any snapshot

on both sides while having all data duplicated at the same time. No further action

is required. When doing the next incremental backup, keep in mind to use the next-

to-last snapshot as parent for the send operation.

Only keep the last snapshot on the source side and all snapshots on the target side. This also allows you to roll back to any snapshot on both sides: to roll back to a specific snapshot on the source side, perform a send/receive operation of a complete snapshot from the target side to the source side. Do a delete/move operation on the source side.

Only keep the last snapshot on both sides. This way you have a backup on the target

side that represents the state of the last snapshot made on the source side. It is not

possible to roll back to other snapshots. Do a delete/move operation on the source

and the target side.

a.

To only keep the last snapshot on the source side, perform the following commands:

sudo btrfs subvolume delete /data/bkp_data

sudo mv /data/bkp_data_2016-07-07 /data/bkp_data

The first command will delete the previous snapshot, the second command renames the current snapshot to /data/bkp_data . This ensures that the last snapshot that was backed up is always named /data/bkp_data . As a consequence, you can also always use this subvolume name as a parent for the incremental send operation.

b.

To only keep the last snapshot on the target side, perform the following commands:

sudo btrfs subvolume delete /backup/bkp_data

sudo mv /backup/bkp_data_2016-07-07 /backup/bkp_data

The first command will delete the previous backup snapshot, the second command renames the current backup snapshot to /backup/bkp_data . This ensures that the latest backup snapshot is always named /backup/bkp_data .
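The incremental backup cycle described in this procedure can be summarized in a small dry-run helper. This is a sketch only: the function name print_incremental_backup and its argument order are hypothetical, and the function merely prints the commands from the procedure instead of executing them, so that they can be reviewed first.

```shell
# Hypothetical dry-run helper: print (do not run) the commands for one
# incremental backup cycle.
#   $1 source subvolume     (for example /data)
#   $2 parent snapshot      (for example /data/bkp_data)
#   $3 target directory     (for example /backup)
#   $4 date stamp           (for example the output of: date +%F)
print_incremental_backup() {
  _new="$1/bkp_data_$4"
  echo "btrfs subvolume snapshot -r $1 $_new"        # new read-only snapshot
  echo "sync"                                        # flush it to disk
  echo "btrfs send -p $2 $_new | btrfs receive $3"   # send only the difference
}

print_incremental_backup /data /data/bkp_data /backup 2016-07-07
```

After reviewing the printed commands, run them as root, as in the procedure above.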

Tip: Sending to a Remote Target Side

To send the snapshots to a remote machine, use SSH:

btrfs send /data/bkp_data | ssh [email protected] 'btrfs receive /backup'

1.2.7 Data Deduplication Support

Btrfs supports data deduplication by replacing identical blocks in the file system with logical links to a single copy of the block in a common storage location. SUSE Linux Enterprise Server provides the tool duperemove for scanning the file system for identical blocks. When used on a Btrfs file system, it can also be used to deduplicate these blocks. duperemove is not installed by default. To make it available, install the package duperemove .

Note: Use Cases

As of SUSE Linux Enterprise Server 12 SP5 duperemove is not suited to deduplicate the entire file system. It is intended to be used to deduplicate a set of 10 to 50 large files that possibly have lots of blocks in common, such as virtual machine images.

duperemove can either operate on a list of files or recursively scan a directory:

sudo duperemove OPTIONS file1 file2 file3

sudo duperemove -r OPTIONS directory

It operates in two modes: read-only and de-duping. When run in read-only mode (that is, without the -d switch), it scans the given files or directories for duplicated blocks and prints them. This works on any file system.

Running duperemove in de-duping mode is only supported on Btrfs file systems. After scanning the given files or directories, it submits the duplicated blocks for deduplication.

For more information see man 8 duperemove .

1.2.8 Deleting Subvolumes from the Root File System

You may need to delete one of the default Btrfs subvolumes from the root file system for specific purposes. One of them is transforming a subvolume (for example @/home or @/srv ) into a file system on a separate device. The following procedure illustrates how to delete a Btrfs subvolume:

1.

Identify the subvolume you need to delete (for example @/opt ). Note that the root path always has subvolume ID 5.

tux > sudo btrfs subvolume list /

ID 256 gen 30 top level 5 path @

ID 258 gen 887 top level 256 path @/var

ID 259 gen 872 top level 256 path @/usr/local

ID 260 gen 886 top level 256 path @/tmp

ID 261 gen 60 top level 256 path @/srv

ID 262 gen 886 top level 256 path @/root

ID 263 gen 39 top level 256 path @/opt

[...]

2.

Find the device name that hosts the root partition:

tux > sudo btrfs device usage /

/dev/sda1, ID: 1

Device size: 23.00GiB

Device slack: 0.00B

Data,single: 7.01GiB

Metadata,DUP: 1.00GiB

System,DUP: 16.00MiB

Unallocated: 14.98GiB

3.

Mount the root file system (subvolume with ID 5) on a separate mount point (for example /mnt ):

tux > sudo mount -o subvolid=5 /dev/sda1 /mnt

4.

Delete the @/opt subvolume from the mounted root file system:

tux > sudo btrfs subvolume delete /mnt/@/opt

5.

Unmount the previously mounted root file system:

tux > sudo umount /mnt
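When scripting the first step of this procedure, the ID of a subvolume can be extracted from the btrfs subvolume list output. The following sketch assumes the output layout shown in step 1 (ID in the second column, path in the last column); the function name subvolume_id is hypothetical.

```shell
# Hypothetical helper: reads `btrfs subvolume list` output on stdin and
# prints the ID of the subvolume whose path matches the first argument.
subvolume_id() {
  # Example line: ID 263 gen 39 top level 256 path @/opt
  awk -v path="$1" '$NF == path { print $2 }'
}
```

A possible invocation is sudo btrfs subvolume list / | subvolume_id @/opt .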

1.3 XFS

SGI started developing XFS in the early 1990s, originally as the file system for its IRIX OS. The idea behind XFS was to create a high-performance 64-bit journaling file system to meet extreme computing challenges. XFS is very good at manipulating large files and performs well on high-end hardware. XFS is the default file system for data partitions in SUSE Linux Enterprise Server.

A quick review of XFS’s key features explains why it might prove to be a strong competitor for other journaling file systems in high-end computing.

High scalability

XFS offers high scalability by using allocation groups

At the creation time of an XFS file system, the block device underlying the file system is divided into eight or more linear regions of equal size. Those are called allocation groups. Each allocation group manages its own inodes and free disk space. Practically, allocation groups can be seen as file systems in a file system. Because allocation groups are rather independent of each other, more than one of them can be addressed by the kernel simultaneously. This feature is the key to XFS’s great scalability. Naturally, the concept of independent allocation groups suits the needs of multiprocessor systems.

High performance

XFS offers high performance through efficient management of disk space

Free space and inodes are handled by B+ trees inside the allocation groups. The use of B+ trees greatly contributes to XFS’s performance and scalability. XFS uses delayed allocation, which handles allocation by breaking the process into two pieces. A pending transaction is stored in RAM and the appropriate amount of space is reserved. At this point, XFS has not yet decided where exactly (in file system blocks) the data should be stored. This decision is delayed until the last possible moment. Some short-lived temporary data might never make its way to disk, because it is obsolete by the time XFS decides where actually to save it. In this way, XFS increases write performance and reduces file system fragmentation. Because delayed allocation results in less frequent write events than in other file systems, it is likely that data loss after a crash during a write is more severe.

Preallocation to avoid file system fragmentation

Before writing the data to the file system, XFS reserves (preallocates) the free space needed for a file. Thus, file system fragmentation is greatly reduced. Performance is increased because the contents of a file are not distributed all over the file system.

1.3.1 XFS formats

SUSE Linux Enterprise Server supports the “on-disk format” (v5) of the XFS file system. The main advantages of this format are automatic checksums of all XFS metadata, file type support, and support for a larger number of access control lists for a file.

Note that this format is not supported by SUSE Linux Enterprise kernels older than version 3.12, by xfsprogs older than version 3.2.0, and by GRUB2 versions released before SUSE Linux Enterprise 12.

Important: The V4 Format Is Deprecated

XFS is deprecating file systems with the V4 format. This file system format was created by the command:

mkfs.xfs -m crc=0 DEVICE

The format was used in SLE 11 and older releases. Currently, it triggers a warning message that is shown by dmesg :

Deprecated V4 format (crc=0) will not be supported after September 2030

If you see the message above in the output of the dmesg command, it is recommended that you update your file system to the V5 format:

1.

Back up your data to another device.

2.

Create the file system on the device.

mkfs.xfs -m crc=1 DEVICE

3.

Restore the data from the backup on the updated device.
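Besides looking for the dmesg warning, the format of an existing XFS file system can be read from the crc field that xfs_info prints. The following is a sketch under assumptions: the function name xfs_format_version is hypothetical, and it assumes that a V5 file system reports crc=1 , while a V4 file system reports crc=0 or no crc field at all.

```shell
# Hypothetical helper: reads `xfs_info` output on stdin and prints V5 if
# metadata checksums are enabled (crc=1), otherwise V4.
xfs_format_version() {
  if grep -q 'crc=1'; then
    echo V5
  else
    echo V4
  fi
}
```

A possible invocation is sudo xfs_info MOUNT_POINT | xfs_format_version .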

1.4 Ext2

The origins of Ext2 go back to the early days of Linux history. Its predecessor, the Extended File System, was implemented in April 1992 and integrated in Linux 0.96c. The Extended File System underwent several modifications and, as Ext2, became the most popular Linux file system for years. With the creation of journaling file systems and their short recovery times, Ext2 became less important.

A brief summary of Ext2’s strengths might help understand why it was (and in some areas still is) the favorite Linux file system of many Linux users.

Solidity and Speed

Being an “old-timer”, Ext2 underwent many improvements and was heavily tested. This might be the reason people often refer to it as rock-solid. After a system outage when the file system could not be cleanly unmounted, e2fsck starts to analyze the file system data. Metadata is brought into a consistent state and pending files or data blocks are written to a designated directory (called lost+found ). In contrast to journaling file systems, e2fsck analyzes the entire file system and not only the recently modified bits of metadata. This takes significantly longer than checking the log data of a journaling file system. Depending on file system size, this procedure can take half an hour or more. Therefore, it is not desirable to choose Ext2 for any server that needs high availability. However, because Ext2 does not maintain a journal and uses less memory, it is sometimes faster than other file systems.

Easy Upgradability

Because Ext3 is based on the Ext2 code and shares its on-disk format and its metadata

format, upgrades from Ext2 to Ext3 are very easy.

1.5 Ext3

Ext3 was designed by Stephen Tweedie. Unlike all other next-generation file systems, Ext3 does not follow a completely new design principle. It is based on Ext2. These two file systems are very closely related to each other. An Ext3 file system can be easily built on top of an Ext2 file system. The most important difference between Ext2 and Ext3 is that Ext3 supports journaling.

In summary, Ext3 has three major advantages to offer:

1.5.1 Easy and Highly Reliable Upgrades from Ext2

The code for Ext2 is the strong foundation on which Ext3 could become a highly acclaimed next-generation file system. Its reliability and solidity are elegantly combined in Ext3 with the advantages of a journaling file system. Unlike transitions to other journaling file systems, such as ReiserFS or XFS, which can be quite tedious (making backups of the entire file system and re-creating it from scratch), a transition to Ext3 is a matter of minutes. It is also very safe, because re-creating an entire file system from scratch might not work flawlessly. Considering the number of existing Ext2 systems that await an upgrade to a journaling file system, you can easily see why Ext3 might be of some importance to many system administrators. Downgrading from Ext3 to Ext2 is as easy as the upgrade. Perform a clean unmount of the Ext3 file system and remount it as an Ext2 file system.

1.5.2 Reliability and Performance

Some other journaling file systems follow the “metadata-only” journaling approach. This means your metadata is always kept in a consistent state, but this cannot be automatically guaranteed for the file system data itself. Ext3 is designed to take care of both metadata and data. The degree of “care” can be customized. Enabling Ext3 in the data=journal mode offers maximum security (data integrity), but can slow down the system because both metadata and data are journaled. A relatively new approach is to use the data=ordered mode, which ensures both data and metadata integrity, but uses journaling only for metadata. The file system driver collects all data blocks that correspond to one metadata update. These data blocks are written to disk before the metadata is updated. As a result, consistency is achieved for metadata and data without sacrificing performance. A third option to use is data=writeback , which allows data to be written to the main file system after its metadata has been committed to the journal. This option is often considered the best in performance. It can, however, allow old data to reappear in files after crash and recovery while internal file system integrity is maintained. Ext3 uses the data=ordered option as the default.

1.5.3 Converting an Ext2 File System into Ext3

To convert an Ext2 file system to Ext3:

1.

Create an Ext3 journal by running tune2fs -j as the root user.

This creates an Ext3 journal with the default parameters.

To specify how large the journal should be and on which device it should reside, run

tune2fs -J instead together with the desired journal options size= and device= . More

information about the tune2fs program is available in the tune2fs man page.

2.

Edit the file /etc/fstab as the root user to change the file system type specified for the corresponding partition from ext2 to ext3 , then save the changes.

This ensures that the Ext3 file system is recognized as such. The change takes effect after the next reboot.

3.

To boot a root file system that is set up as an Ext3 partition, add the modules ext3 and jbd to the initrd . Do so by

a.

opening or creating /etc/dracut.conf.d/10-filesystem.conf and adding the

following line (mind the leading whitespace):

force_drivers+=" ext3 jbd"

b.

and running the dracut -f command.

4.

Reboot the system.
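The /etc/fstab change in step 2 can be previewed before editing the file. The following sketch uses a hypothetical function name, fstab_ext2_to_ext3 , and assumes the usual /etc/fstab layout with the device in the first column and the file system type in the third column. It writes the modified table to standard output and does not change any file.

```shell
# Hypothetical helper: reads an fstab on stdin and prints it with the file
# system type of the given device changed from ext2 to ext3.
#   $1 device as written in the first fstab column (for example /dev/sda2)
fstab_ext2_to_ext3() {
  # Only the matching entry is rewritten; all other lines pass through.
  awk -v dev="$1" '$1 == dev && $3 == "ext2" { $3 = "ext3" } { print }'
}
```

A possible invocation is fstab_ext2_to_ext3 /dev/sda2 < /etc/fstab . Note that awk re-joins the fields of the changed line with single spaces, so column alignment of that line is lost.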

1.5.4 Ext3 File System Inode Size and Number of Inodes

An inode stores information about the file and its block location in the file system. To allow space in the inode for extended attributes and ACLs, the default inode size for Ext3 was increased from 128 bytes on SLES 10 to 256 bytes on SLES 11. As compared to SLES 10, when you make a new Ext3 file system on SLES 11, the default amount of space preallocated for the same number of inodes is doubled, and the usable space for files in the file system is reduced by that amount. Thus, you must use larger partitions to accommodate the same number of inodes and files as were possible for an Ext3 file system on SLES 10.

When you create a new Ext3 file system, the space in the inode table is preallocated for the total number of inodes that can be created. The bytes-per-inode ratio and the size of the file system determine how many inodes are possible. When the file system is made, an inode is created for every bytes-per-inode bytes of space:

number of inodes = total size of the file system divided by the number of bytes per inode

The number of inodes controls the number of files you can have in the file system: one inode for each file. To address the increased inode size and reduced usable space available, the default for the bytes-per-inode ratio was increased from 8192 bytes on SLES 10 to 16384 bytes on SLES 11. The doubled ratio means that the number of files that can be created is one-half of the number of files possible for an Ext3 file system on SLES 10.
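The effect of the two defaults can be checked with the formula above. The following sketch uses hypothetical function names ( inode_count and inode_table_bytes ) and a 1 GiB file system as an example. It applies the plain formula only, so the numbers that mke2fs actually chooses may differ slightly.

```shell
# Hypothetical helpers applying the formula from the text.
#   inode_count FS_BYTES BYTES_PER_INODE
inode_count() {
  echo $(( $1 / $2 ))
}
#   inode_table_bytes FS_BYTES BYTES_PER_INODE INODE_SIZE
inode_table_bytes() {
  echo $(( $1 / $2 * $3 ))
}

fs=$(( 1024 * 1024 * 1024 ))   # 1 GiB example file system
echo "SLES 10 defaults: $(inode_count $fs 8192) inodes, $(inode_table_bytes $fs 8192 128) bytes of inode table"
echo "SLES 11 defaults: $(inode_count $fs 16384) inodes, $(inode_table_bytes $fs 16384 256) bytes of inode table"
```

In this example the SLES 11 defaults yield half as many inodes as the SLES 10 defaults, while the space taken by the inode table is the same, because the doubled inode size is offset by the halved inode count.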

Important: Changing the Inode Size of an Existing Ext3 File System

After the inodes are allocated, you cannot change the settings for the inode size or bytes-per-inode ratio. No new inodes are possible without re-creating the file system with different settings, or unless the file system gets extended. When you exceed the maximum number of inodes, no new files can be created on the file system until some files are deleted.

When you make a new Ext3 file system, you can specify the inode size and bytes-per-inode ratio to control inode space usage and the number of files possible on the file system. If the block size, inode size, and bytes-per-inode ratio values are not specified, the default values in the /etc/mke2fs.conf file are applied. For information, see the mke2fs.conf(5) man page.

Use the following guidelines:

Inode size: The default inode size is 256 bytes. Specify a value in bytes that is a power of 2, at least 128, and no larger than the block size, such as 128, 256, 512, and so on. Use 128 bytes only if you do not use extended attributes or ACLs on your Ext3 file systems.

Bytes-per-inode ratio: The default bytes-per-inode ratio is 16384 bytes. Valid bytes-per-inode ratio values must be a power of 2 equal to 1024 or greater in bytes, such as 1024, 2048, 4096, 8192, 16384, 32768, and so on. This value should not be smaller than the block size of the file system, because the block size is the smallest chunk of space used to store data. The default block size for the Ext3 file system is 4 KB.

In addition, you should consider the number of files and the size of files you need to store. For example, if your file system will have many small files, you can specify a smaller bytes-per-inode ratio, which increases the number of inodes. If your file system will have very large files, you can specify a larger bytes-per-inode ratio, which reduces the number of possible inodes.

Generally, it is better to have too many inodes than to run out of them. If you have too few inodes and very small files, you could reach the maximum number of files on a disk that is practically empty. If you have too many inodes and very large files, you might have free space reported but be unable to use it because you cannot create new files in space reserved for inodes.

If you do not use extended attributes or ACLs on your Ext3 file systems, you can restore the SLES 10 behavior by specifying 128 bytes as the inode size and 8192 bytes as the bytes-per-inode ratio when you make the file system. Use any of the following methods to set the inode size and bytes-per-inode ratio:

Modifying the default settings for all new Ext3 file systems: In a text editor, modify the defaults section of the /etc/mke2fs.conf file to set the inode_size and inode_ratio to the desired default values. The values apply to all new Ext3 file systems. For example:

blocksize = 4096

inode_size = 128

inode_ratio = 8192

At the command line: Pass the inode size ( -I 128 ) and the bytes-per-inode ratio ( -i 8192 ) to the mkfs.ext3(8) command or the mke2fs(8) command when you create a new Ext3 file system. For example, use either of the following commands:

sudo mkfs.ext3 -b 4096 -i 8192 -I 128 /dev/sda2

sudo mke2fs -t ext3 -b 4096 -i 8192 -I 128 /dev/sda2

During installation with YaST: Pass the inode size and bytes-per-inode ratio values when you create a new Ext3 file system during the installation. In the YaST Partitioner on the Edit Partition page under Formatting Options, select Format partition and Ext3, then click Options. In the File system options dialog, select the desired values from the Block Size in Bytes, Bytes-per-inode, and Inode Size drop-down boxes.

For example, select 4096 from the Block Size in Bytes drop-down box, select 8192 from the Bytes per inode drop-down box, select 128 from the Inode Size drop-down box, then click OK.

During installation with AutoYaST: In an AutoYaST profile, you can use the fs_options tag to set the opt_bytes_per_inode ratio value of 8192 for -i and the opt_inode_density value of 128 for -I:

<partitioning config:type="list">

<drive>

<device>/dev/sda</device>

<initialize config:type="boolean">true</initialize>

<partitions config:type="list">

<partition>

<filesystem config:type="symbol">ext3</filesystem>

<format config:type="boolean">true</format>

<fs_options>

<opt_bytes_per_inode>

<option_str>-i</option_str>

<option_value>8192</option_value>

</opt_bytes_per_inode>

<opt_inode_density>

<option_str>-I</option_str>

<option_value>128</option_value>

</opt_inode_density>

</fs_options>

<mount>/</mount>

<partition_id config:type="integer">131</partition_id>

<partition_type>primary</partition_type>

<size>25G</size>

</partition>

</partitions>

</drive>

</partitioning>

For information, see https://www.suse.com/support/kb/doc.php?id=7009075 (SLES11 ext3 partitions can only store 50% of the files that can be stored on SLES10 [Technical Information Document 7009075]).

1.6 Ext4

In 2006, Ext4 started as a fork from Ext3. It eliminates some storage limitations of Ext3 by supporting volumes with a size of up to 1 exbibyte, files with a size of up to 16 tebibytes and an unlimited number of subdirectories. It also introduces several performance enhancements such as delayed block allocation and a much faster file system checking routine. Ext4 is also more reliable by supporting journal checksums and by providing time stamps measured in nanoseconds. Ext4 is fully backward compatible to Ext2 and Ext3: both file systems can be mounted as Ext4.

1.7 ReiserFS

Officially one of the key features of the 2.4 kernel release, ReiserFS has been available as a

kernel patch for 2.2.x SUSE kernels since version 6.4. ReiserFS was designed by Hans Reiser

and the Namesys development team. It has proven itself to be a powerful alternative to Ext2.

Its key assets are better disk space usage, better disk access performance, faster crash recovery,

and reliability through data journaling.

Important: Support of ReiserFS in SUSE Linux Enterprise Server 12

Existing ReiserFS partitions are supported for the lifetime of SUSE Linux Enterprise Server 12 specifically for migration purposes. Support for creating new ReiserFS file systems has been removed starting with SUSE Linux Enterprise Server 12.

1.8 Other Supported File Systems

Table 1.1, “File System Types in Linux” summarizes some other file systems supported by Linux. They are supported mainly to ensure compatibility and interchange of data with different kinds of media or foreign operating systems.

TABLE 1.1: FILE SYSTEM TYPES IN LINUX

File System Type  Description

cramfs            Compressed ROM file system: A compressed read-only file system for ROMs.
hpfs              High Performance File System: The IBM OS/2 standard file system. Only supported in read-only mode.
iso9660           Standard file system on CD-ROMs.
minix             This file system originated from academic projects on operating systems and was the first file system used in Linux. Today, it is used as a file system for floppy disks.
msdos             fat , the file system originally used by DOS, is today used by various operating systems.
nfs               Network File System: Here, data can be stored on any machine in a network and access might be granted via a network.
ntfs              Windows NT file system; read-only.
smbfs             Server Message Block is used by products such as Windows to enable file access over a network.
sysv              Used on SCO Unix, Xenix, and Coherent (commercial Unix systems for PCs).
ufs               Used by BSD, SunOS, and NextStep. Only supported in read-only mode.
umsdos            Unix on MS-DOS: Applied on top of a standard fat file system, achieves Unix functionality (permissions, links, long file names) by creating special files.
vfat              Virtual FAT: Extension of the fat file system (supports long file names).

1.9 Large File Support in Linux

Originally, Linux supported a maximum file size of 2 GiB (2^31 bytes). Unless a file system
comes with large file support, the maximum file size on a 32-bit system is 2 GiB.

Currently, all our standard file systems have LFS (large file support), which gives a maximum
file size of 2^63 bytes in theory. Table 1.2, “Maximum Sizes of Files and File Systems (On-Disk
Format, 4 KiB Block Size)” offers an overview of the current on-disk format limitations of Linux
files and file systems. The numbers in the table assume that the file systems are using 4 KiB
block size, which is a common standard. When using different block sizes, the results are
different. The maximum file sizes in Table 1.2, “Maximum Sizes of Files and File Systems (On-Disk
Format, 4 KiB Block Size)” can be larger than the file system's actual size when using sparse
blocks.
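The effect of sparse blocks can be observed on a small scale. The following is a minimal sketch, assuming bash and the GNU coreutils tools truncate and stat; the function name sparse_demo and the 100 MiB size are illustrative choices, not part of this guide. It creates a file whose apparent size is larger than the space actually allocated for it.

```shell
#!/bin/bash
# Create a sparse file and compare its apparent size with the space allocated for it.
# Assumption: GNU coreutils (truncate, stat) and a file system that supports sparse files.
sparse_demo() {
    local file apparent allocated
    file=$(mktemp) || return 1
    # Extend the file to 100 MiB without writing any data blocks.
    truncate -s 100M "$file"
    # %s: size in bytes as seen by applications.
    apparent=$(stat -c %s "$file")
    # %b: number of allocated blocks, %B: size in bytes of each such block.
    allocated=$(( $(stat -c %b "$file") * $(stat -c %B "$file") ))
    echo "apparent=$apparent allocated=$allocated"
    rm -f "$file"
}

sparse_demo
```

The apparent size is what counts against the maximum file size of the on-disk format, while only the allocated blocks consume space on the device.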

Note: Binary Multiples

In this document: 1024 Bytes = 1 KiB; 1024 KiB = 1 MiB; 1024 MiB = 1 GiB; 1024 GiB
= 1 TiB; 1024 TiB = 1 PiB; 1024 PiB = 1 EiB (see also NIST: Prefixes for Binary Multiples
(http://physics.nist.gov/cuu/Units/binary.html)).
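To relate the powers of two quoted in this section to these binary units, the conversion can be sketched as follows. This is an illustrative bash helper; the name pow2_to_unit is an assumption and not a tool shipped with SUSE Linux Enterprise Server. Every 10 in the exponent moves one unit up, and the remainder gives the leading number.

```shell
#!/bin/bash
# Convert 2^EXP bytes into the binary units used in this guide.
# Hypothetical helper for illustration only.
pow2_to_unit() {
    local exp=$1
    local units=(Bytes KiB MiB GiB TiB PiB EiB)
    # Each step of 10 in the exponent is a factor of 1024, that is, one unit up.
    local idx=$(( exp / 10 ))
    # EiB is the largest unit defined in the note, so stop there.
    if (( idx > 6 )); then
        idx=6
    fi
    local rem=$(( exp - idx * 10 ))
    echo "$(( 1 << rem )) ${units[idx]}"
}

pow2_to_unit 31   # original maximum file size
pow2_to_unit 63   # theoretical maximum file size with large file support
```

For example, 2^31 bytes is 2^1 times 2^30 bytes, which is 2 GiB, and 2^63 bytes is 2^3 times 2^60 bytes, which is 8 EiB.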


TABLE 1.2: MAXIMUM SIZES OF FILES AND FILE SYSTEMS (ON-DISK FORMAT, 4 KIB BLOCK SIZE)

File System (4 KiB Block Size)          Maximum File System Size   Maximum File Size

Btrfs                                   16 EiB                     16 EiB
Ext3                                    16 TiB                     2 TiB
Ext4                                    1 EiB                      16 TiB
OCFS2 (a cluster-aware file system      16 TiB                     1 EiB
available in the High Availability
Extension)
ReiserFS v3.6                           16 TiB                     1 EiB
XFS                                     8 EiB                      8 EiB
NFSv2 (client side)                     8 EiB                      2 GiB
NFSv3/NFSv4 (client side)               8 EiB                      8 EiB

Important: Limitations

Table 1.2, “Maximum Sizes of Files and File Systems (On-Disk Format, 4 KiB Block Size)” describes
the limitations regarding the on-disk format. The Linux kernel imposes its own limits on
the size of files and file systems handled by it. These are as follows:

File Size
    On 32-bit systems, files cannot exceed 2 TiB (2^41 bytes).

File System Size
    File systems can be up to 2^73 bytes in size. However, this limit is still out of reach
    for the currently available hardware.


1.10 Linux Kernel Storage Limitations

Table 1.3, “Storage Limitations” summarizes the kernel limits for storage associated with SUSE
Linux Enterprise Server.

TABLE 1.3: STORAGE LIMITATIONS

Storage Feature                          Limitation

Maximum number of LUNs supported         16384 LUNs per target.

Maximum number of paths per single LUN   No limit by default. Each path is treated as a
                                         normal LUN. The actual limit is given by the
                                         number of LUNs per target and the number of
                                         targets per HBA (16777215 for a Fibre Channel
                                         HBA).

Maximum number of HBAs                   Unlimited. The actual limit is determined by
                                         the number of PCI slots of the system.

Maximum number of paths with             Approximately 1024. The actual number depends
device-mapper-multipath (in total)       on the length of the device number strings for
per operating system                     each multipath device. It is a compile-time
                                         variable within multipath-tools, which can be
                                         raised if this limit poses a problem.

Maximum size per block device            Up to 8 EiB.

1.11 Troubleshooting File Systems

This section describes some known issues and possible solutions for file systems.

1.11.1 Btrfs Error: No space is left on device

The root ( / ) partition using the Btrfs file system stops accepting data. You receive the error
“No space left on device”.


See the following sections for information about possible causes and prevention of this issue.

1.11.1.1 Disk Space Consumed by Snapper Snapshots

If Snapper is running for the Btrfs file system, the “No space left on device” problem is
typically caused by having too much data stored as snapshots on your system.

You can remove some snapshots from Snapper. However, the snapshots are not deleted
immediately and might not free up as much space as you need.

To delete snapshots from Snapper:

1. Open a terminal.

2. At the command prompt, enter btrfs filesystem show , for example:

   tux > sudo btrfs filesystem show
   Label: none uuid: 40123456-cb2c-4678-8b3d-d014d1c78c78
    Total devices 1 FS bytes used 20.00GB
    devid 1 size 20.00GB used 20.00GB path /dev/sda3

3. Enter

   sudo btrfs fi balance start MOUNTPOINT -dusage=5

   This command attempts to relocate data in empty or near-empty data chunks, allowing
   the space to be reclaimed and reassigned to metadata. This can take a while (many hours
   for 1 TB), although the system is otherwise usable during this time.

4. List the snapshots in Snapper. Enter

   sudo snapper -c root list

5. Delete one or more snapshots from Snapper. Enter

   sudo snapper -c root delete SNAPSHOT_NUMBER(S)

   Ensure that you delete the oldest snapshots first. The older a snapshot is, the more disk
   space it occupies.
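The check in step 2 can be automated. The following is a minimal sketch, assuming bash and awk and the output layout shown in the example above; the function name fully_allocated_devices is hypothetical. It reads the output of btrfs filesystem show and prints every device whose used value equals its size, which suggests that no unallocated space is left on that device.

```shell
#!/bin/bash
# Read `btrfs filesystem show` output on standard input and print the path of
# every device whose "used" value equals its "size" value.
# Assumption: the "devid N size X used Y path P" layout shown in step 2.
fully_allocated_devices() {
    awk '$1 == "devid" {
        size = ""; used = ""; path = ""
        # Pick up the value that follows each keyword on the devid line.
        for (i = 1; i < NF; i++) {
            if ($i == "size") size = $(i + 1)
            if ($i == "used") used = $(i + 1)
            if ($i == "path") path = $(i + 1)
        }
        if (size != "" && size == used) print path
    }'
}

# Usage (requires root privileges):
#   sudo btrfs filesystem show | fully_allocated_devices
```

If the function prints a device, continue with the balance and snapshot cleanup steps described above.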

To help prevent this problem, you can change the Snapper cleanup algorithms. See Book
“Administration Guide”, Chapter 7 “System Recovery and Snapshot Management with Snapper”,
Section 7.6.1.2 “Cleanup Algorithms” for details. The configuration values controlling snapshot
cleanup are EMPTY_* , NUMBER_* , and TIMELINE_* .


If you use Snapper with Btrfs on the file system disk, it is advisable to reserve twice the
amount of disk space suggested by the standard storage proposal. The YaST Partitioner
automatically proposes twice the standard disk space in the Btrfs storage proposal for the
root file system.

1.11.1.2 Disk Space Consumed by Log, Crash, and Cache Files

If the system disk is filling up with data, you can try deleting files from /var/log , /var/crash ,
/var/lib/systemd/coredump and /var/cache .

The Btrfs root file system subvolumes /var/log , /var/crash and /var/cache can use all
of the available disk space during normal operation, and cause a system malfunction. To help
avoid this situation, SUSE Linux Enterprise Server offers Btrfs quota support for subvolumes.
See Section 1.2.5, “Btrfs Quota Support for Subvolumes” for details.

On test and development machines, especially if applications crash frequently, you may also
want to check /var/lib/systemd/coredump , where the core dumps are stored.
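To see which of these directories is worth cleaning up first, their sizes can be compared. The following is a minimal sketch, assuming bash with the standard du and sort tools; the function name report_usage is hypothetical. It prints the usage of each existing directory in KiB, largest first.

```shell
#!/bin/bash
# Print the disk usage in KiB of each given directory, largest first.
# Directories that do not exist are skipped.
report_usage() {
    local dir
    for dir in "$@"; do
        if [ -d "$dir" ]; then
            # -s: one summary line per directory, -k: sizes in KiB.
            du -sk "$dir" 2>/dev/null
        fi
    done | sort -rn
}

# Usage (run as root to include files that are not readable by regular users):
#   report_usage /var/log /var/crash /var/lib/systemd/coredump /var/cache
```

Note that on Btrfs, du does not account for space shared with snapshots, so the figures are only a rough guide.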

1.11.2 Freeing Unused File System Blocks

On solid-state drives (SSDs) and thinly provisioned volumes it is useful to trim blocks not in
use by the file system. SUSE Linux Enterprise Server fully supports unmap or trim operations
on all file systems supporting these methods.

The recommended way to trim a supported file system (except Btrfs) on SUSE Linux Enterprise
Server is to run /sbin/wiper.sh . Make sure to read
/usr/share/doc/packages/hdparm/README.wiper before running this script. For most desktop and
server systems, trimming once a week is sufficient. Mounting a file system with -o discard
is not recommended: it comes with a performance penalty and may negatively affect the
lifetime of SSDs.

Warning: Do Not Use wiper.sh on Btrfs

The wiper.sh script trims read-write mounted ext4 or XFS file systems and read-only
mounted/unmounted ext2, ext3, ext4, or XFS file systems. Do not use wiper.sh on the
Btrfs file system as it may damage your data. Instead, use
/usr/share/btrfsmaintenance/btrfs-trim.sh which is part of the btrfsmaintenance package.
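The rules from this warning can be written down as a small lookup. The following is a sketch only, assuming bash; the function name trim_tool_for and the state values rw , ro , and unmounted are illustrative and not part of any SUSE tool. It maps a file system type and its mount state to the script named in this section, or to none when neither script applies.

```shell
#!/bin/bash
# Suggest a trim script for a file system type and mount state
# ("rw", "ro", or "unmounted"), following the rules in the warning above.
# Hypothetical helper for illustration only.
trim_tool_for() {
    local fstype=$1 state=${2:-rw}
    case "$fstype" in
        btrfs)
            # Never use wiper.sh on Btrfs.
            echo "/usr/share/btrfsmaintenance/btrfs-trim.sh" ;;
        ext4|xfs)
            # Covered by wiper.sh whether mounted read-write, read-only, or unmounted.
            echo "/sbin/wiper.sh" ;;
        ext2|ext3)
            # Covered by wiper.sh only when mounted read-only or unmounted.
            if [ "$state" = "rw" ]; then
                echo "none"
            else
                echo "/sbin/wiper.sh"
            fi ;;
        *)
            echo "none" ;;
    esac
}

# Usage: look up the type of the root file system with findmnt (util-linux).
#   trim_tool_for "$(findmnt -n -o FSTYPE /)" rw
```

The function only prints a suggestion. Read the documentation of the respective script before running it.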


1.11.3 Btrfs: Balancing Data across Devices

The btrfs balance command is part of the btrfs-progs package. It balances block groups
on Btrfs file systems in the following example situations:

Assume you have a 1 TB drive with 600 GB used by data and you add another 1 TB drive.
The balancing will theoretically result in having 300 GB of used space on each drive.

You have a lot of near-empty chunks on a device. Their space will not be available until
the balancing has cleared those chunks.

You need to compact half-empty block groups based on the percentage of their usage. The
following command will balance block groups whose usage is 5% or less:

tux > sudo btrfs balance start -dusage=5 /

Tip

The /etc/cron.weekly/btrfs-balance script takes care of cleaning up unused
block groups on a weekly basis.

You need to clear out non-full portions of block devices and spread data more evenly.

You need to migrate data between different RAID types. For example, to convert data on
a set of disks from RAID1 to RAID5, run the following command:

tux > sudo btrfs balance start -dprofiles=raid1,convert=raid5 /

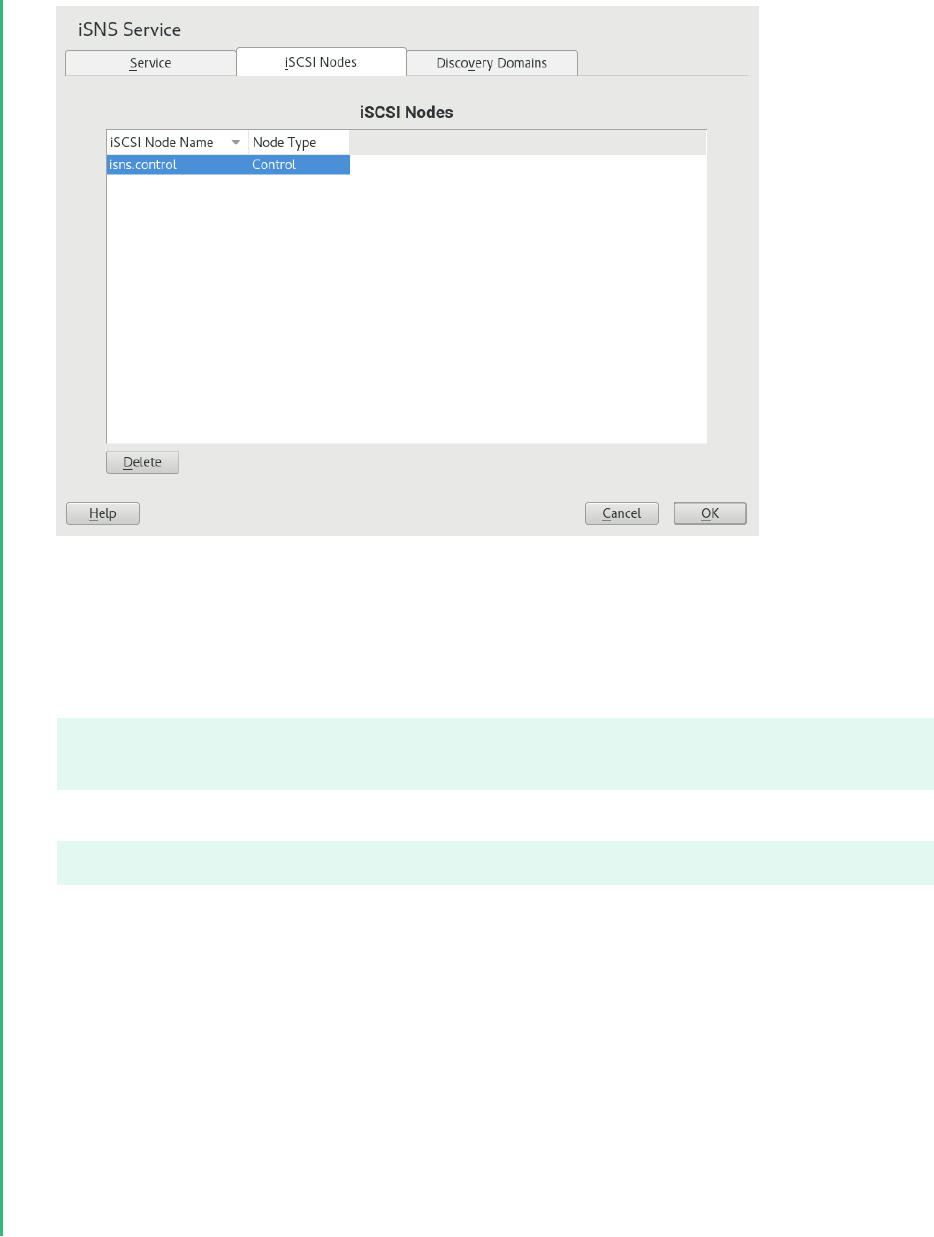

Tip